- ZFS can maintain data redundancy through several multi-disk strategies. These include mirroring and striped mirrors, equivalent to traditional RAID 1 and RAID 10 arrays, as well as “RAID-Z” configurations that tolerate the failure of one, two or three member disks in a set.

- A killer feature of ZFS is how easily compression can be enabled. As of 2018, the obvious resolution is: use ZFS compression. Combined with sparse volumes (ZFS thin provisioning), it is a must-do option for better performance and disk space utilization.

- The Dom0 hosts the ZFS file system, and VMs are allocated storage as needed. Another option is to use iSCSI or even Samba to mount your ZFS filesystem in a Windows VM.

- 1 Using ZFS Storage Plugin (via Proxmox VE GUI or shell)

- 2 Misc

- 2.3 Example configurations for running Proxmox VE with ZFS

- 3 Troubleshooting and known issues
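The compression and thin-provisioning advice in the list above can be sketched as two commands. The dataset name tank/data, the zvol name tank/vm-100-disk-0 and the 32G size are placeholders rather than values from this page, and lz4 is only one assumed choice of algorithm. The helpers print the commands so they can be reviewed before being run as root.

```shell
# Sketch only: prints the commands instead of running them.
# "tank/data", "tank/vm-100-disk-0" and "32G" are placeholder values.

# Enable compression on a dataset (only newly written blocks are compressed).
compression_cmd() {
  # $1 = dataset, $2 = algorithm (defaults to lz4)
  echo "zfs set compression=${2:-lz4} $1"
}

# Create a sparse (thin-provisioned) zvol: -s skips the space reservation.
sparse_zvol_cmd() {
  # $1 = zvol name, $2 = volume size
  echo "zfs create -s -V $2 $1"
}

compression_cmd tank/data
sparse_zvol_cmd tank/vm-100-disk-0 32G
```

Once data has been written, the compressratio property of the dataset (zfs get compressratio) reports how much space compression is saving.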

Efficient local or remote replication: ZFS send and receive transfer only the changed blocks.

Contributing to OpenZFS

The OpenZFS project brings together developers from the Linux, FreeBSD, illumos, macOS, and Windows platforms. OpenZFS is supported by a wide range of companies. There are many ways to contribute to OpenZFS.
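The incremental replication with ZFS send and receive mentioned above can be sketched as follows. The dataset, snapshot and host names are hypothetical, and transport over ssh is an assumption; the helper only prints the pipeline.

```shell
# Sketch only: prints the replication pipeline instead of running it.
# Dataset, snapshot and host names used below are placeholders.

# Incremental send of dataset $1 from snapshot $2 to snapshot $3,
# received on host $4 into dataset $5.
incremental_send_cmd() {
  echo "zfs send -i $1@$2 $1@$3 | ssh $4 zfs receive $5"
}

incremental_send_cmd tank/data monday tuesday backuphost backup/data
```

Both snapshots must exist on the sending side, and the earlier one must already exist on the receiving side, for example from a previous full send.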

Using ZFS Storage Plugin (via Proxmox VE GUI or shell)

After the ZFS pool has been created, you can add it with the Proxmox VE GUI or CLI.

Adding a ZFS storage via CLI

To add it via the CLI, use the pvesm command.
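A minimal sketch of the CLI call, assuming the pvesm storage manager shipped with Proxmox VE. The storage ID local-zfs-tank and the pool name tank are placeholders, and the helper prints the command rather than running it.

```shell
# Sketch only: prints the command instead of running it.
# "local-zfs-tank" (storage ID) and "tank" (pool) are placeholders.

add_zfs_storage_cmd() {
  # $1 = storage ID to show in Proxmox VE, $2 = existing ZFS pool or dataset
  echo "pvesm add zfspool $1 --pool $2"
}

add_zfs_storage_cmd local-zfs-tank tank
```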

Adding a ZFS storage via GUI

To add it with the GUI: go to the datacenter, add storage, select ZFS.

Misc

QEMU disk cache mode

If you get the warning:

or a warning that the filesystem does not support O_DIRECT, set the disk cache type of your VM from none to writeback.

LXC with ACL on ZFS

By default, ZFS stores ACLs as hidden files on the filesystem. This reduces performance enormously, and with several thousand files a system can feel unresponsive. Storing the xattr in the inode resolves this performance issue.

Modification to do

Warning: Do not set dnodesize on rpool because GRUB is not able to handle a different size. See bug entry https://savannah.gnu.org/bugs/?func=detailitem&item_id=48885
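The modification and the warning above can be sketched together. The dataset name tank/containers is hypothetical, and the guard assumes the root pool is called rpool as in the warning. The helper prints the command and refuses anything on rpool.

```shell
# Sketch only: prints the command instead of running it.
# "tank/containers" is a placeholder dataset name.

# Print the property change, but refuse the root pool, where a changed
# dnodesize can leave GRUB unable to read the pool.
xattr_sa_cmd() {
  case "$1" in
    rpool|rpool/*)
      echo "refusing to set dnodesize on $1" >&2
      return 1
      ;;
  esac
  echo "zfs set xattr=sa dnodesize=auto $1"
}

xattr_sa_cmd tank/containers
```

The new settings apply to files written after the change; existing files keep their previous xattr layout until they are rewritten.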

Example configurations for running Proxmox VE with ZFS

Install on a high performance system

As of 2013 and later, high performance servers have 16-64 cores, 256GB-1TB RAM and potentially many 2.5" disks and/or a PCIe based SSD with half a million IOPS. High performance systems benefit from a number of custom settings; for example, enabling compression typically improves performance.

- If you have a good number of disks, keep them organized by using aliases. Edit /etc/zfs/vdev_id.conf to prepare aliases for disk devices found in /dev/disk/by-id/:
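A sketch of what such alias lines could look like, using the "alias name devlink" form of vdev_id.conf. The by-id names and the d0, d1 naming scheme are made up for illustration; real names come from listing /dev/disk/by-id/ on the host.

```shell
# Sketch only: prints alias lines suitable for /etc/zfs/vdev_id.conf.
# The by-id names passed in below are made-up placeholders.

# Emit one "alias dN /dev/disk/by-id/<name>" line per argument,
# numbering the aliases d0, d1, ... in argument order.
vdev_aliases() {
  n=0
  for id in "$@"; do
    echo "alias d$n /dev/disk/by-id/$id"
    n=$((n + 1))
  done
}

vdev_aliases scsi-EXAMPLE0001 scsi-EXAMPLE0002
```

After updating the file, running udevadm trigger should make the aliases appear under /dev/disk/by-vdev/.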

Use flash for caching/logs. If you have only one SSD, use parted or gdisk to create a small partition for the ZIL (ZFS intent log) and a larger one for the L2ARC (ZFS read cache on disk). Make sure that the ZIL is on the first partition. In our case we have an Express Flash PCIe SSD with 175GB capacity, set up with a 25GB ZIL partition and a 150GB L2ARC cache partition.

- edit /etc/modprobe.d/zfs.conf to apply several tuning options for high performance servers:

- create a zpool of striped mirrors (equivalent to RAID10) with log device and cache and always enable compression:

- check the status of the newly created pool:
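The three steps above (module tuning, pool creation, status check) can be sketched as follows. Everything concrete here is an assumption: the 8 GiB ARC cap, the pool name tank, the disk aliases d0 to d3, the SSD partition names, and the use of -O compression=lz4 to enable compression at creation time. The helpers print the config line and commands rather than applying them.

```shell
# Sketch only: prints config and commands instead of applying them.
# Pool name, disk aliases, SSD partitions and the ARC cap are placeholders.

# Line for /etc/modprobe.d/zfs.conf capping the ARC at $1 GiB (in bytes).
arc_max_line() {
  echo "options zfs zfs_arc_max=$(( $1 * 1024 * 1024 * 1024 ))"
}

# zpool create for striped mirrors with a log and a cache device.
# $1 = pool, $2 = log device, $3 = cache device, remaining args = data
# disks, paired in order into two-way mirrors.
striped_mirror_cmd() {
  if [ "$#" -lt 5 ] || [ $(( ($# - 3) % 2 )) -ne 0 ]; then
    echo "need an even number of data disks (at least 2)" >&2
    return 1
  fi
  cmd="zpool create -O compression=lz4 $1"
  log=$2
  cache=$3
  shift 3
  while [ "$#" -ge 2 ]; do
    cmd="$cmd mirror $1 $2"
    shift 2
  done
  echo "$cmd log $log cache $cache"
}

arc_max_line 8
striped_mirror_cmd tank ssd-part1 ssd-part2 d0 d1 d2 d3
echo "zpool status tank"
```

Module options take effect when the zfs module is loaded, so a change to /etc/modprobe.d/zfs.conf usually needs a reboot, and an initramfs refresh if the root filesystem is on ZFS.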

Using PVE 2.3 on a 2013 high performance system with ZFS, you can install Windows Server 2012 Datacenter Edition with GUI in just under 4 minutes.

Troubleshooting and known issues

ZFS packages are not installed

If you upgraded to 3.4 or later, the zfsutils package is not installed. You can install it with apt:

Grub boot ZFS problem

- Symptoms: stuck at boot with a blinking prompt.

- Reason: If you use ZFS RAID, your mainboard may not initialize all disks correctly, so GRUB waits for all RAID disk members and fails. This can happen with more than 2 disks in a ZFS RAID configuration; we saw it on some boards with ZFS RAID-0/RAID-10.

Boot fails and goes into busybox

If booting fails with something like

it is because ZFS is invoked too soon (this has sometimes happened when connecting an SSD for a future ZIL configuration). To prevent it, there have been some suggestions in the forum. Try to boot following the suggestions of busybox or searching the forum, and try ONE of the following:

a) edit /etc/default/grub and add 'rootdelay=10' to GRUB_CMDLINE_LINUX_DEFAULT (i.e. GRUB_CMDLINE_LINUX_DEFAULT='rootdelay=10 quiet'), then run update-grub

b) edit /etc/default/zfs, set ZFS_INITRD_PRE_MOUNTROOT_SLEEP='4', then run 'update-initramfs -k 4.2.6-1-pve -u' (substituting your installed kernel version)
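Option a) can be sketched as a small helper that edits a file passed as an argument, so it can be tried on a copy before touching /etc/default/grub. The function name add_rootdelay is hypothetical, and the demo at the end works on a scratch file only.

```shell
# Sketch only: edits the file given as $1; try it on a copy first.
# Changing the real /etc/default/grub requires root.

# Prepend rootdelay=10 to GRUB_CMDLINE_LINUX_DEFAULT unless a rootdelay
# setting is already present on that line.
add_rootdelay() {
  if grep -q '^GRUB_CMDLINE_LINUX_DEFAULT=.*rootdelay=' "$1"; then
    return 0
  fi
  sed "s/^\(GRUB_CMDLINE_LINUX_DEFAULT=[\"']\)/\1rootdelay=10 /" "$1" > "$1.new" \
    && mv "$1.new" "$1"
}

# Demo on a scratch file rather than the real config.
demo=$(mktemp)
echo 'GRUB_CMDLINE_LINUX_DEFAULT="quiet"' > "$demo"
add_rootdelay "$demo"
cat "$demo"
rm -f "$demo"
```

After changing the real file, update-grub still has to be run as described in a).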

Snapshot of LXC on ZFS

If you can't create a snapshot of an LXC container on ZFS and you get the following message:

you can run the following commands:

Now set /mnt/vztmp as the tmp directory in your /etc/vzdump.conf.
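A sketch of these steps, under stated assumptions: the dataset name rpool/vztmp is a guess (only the mount point /mnt/vztmp comes from the text above), and the setting is written using the tmpdir key of vzdump.conf. The zfs command is only printed, and the helper edits whichever file it is given, so it can be tried on a copy first.

```shell
# Sketch only: the dataset name rpool/vztmp is an assumption; the mount
# point /mnt/vztmp comes from the text above.

# Command that would create a dataset mounted at /mnt/vztmp.
echo "zfs create -o mountpoint=/mnt/vztmp rpool/vztmp"

# Set "tmpdir: <dir>" in the vzdump config file $1, replacing an active
# tmpdir line if there is one and appending otherwise.
set_vzdump_tmpdir() {
  if grep -q '^tmpdir:' "$1"; then
    sed "s|^tmpdir:.*|tmpdir: $2|" "$1" > "$1.new" && mv "$1.new" "$1"
  else
    echo "tmpdir: $2" >> "$1"
  fi
}
```

For example, set_vzdump_tmpdir /etc/vzdump.conf /mnt/vztmp, run as root, would point vzdump at the new dataset.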

Replacing a failed disk in the root pool

Glossary

- ZPool is the logical unit, built from the underlying disks, that ZFS uses.

- ZVol is an emulated block device provided by ZFS.

- ZIL is the ZFS Intent Log, a small block device ZFS uses to write faster.

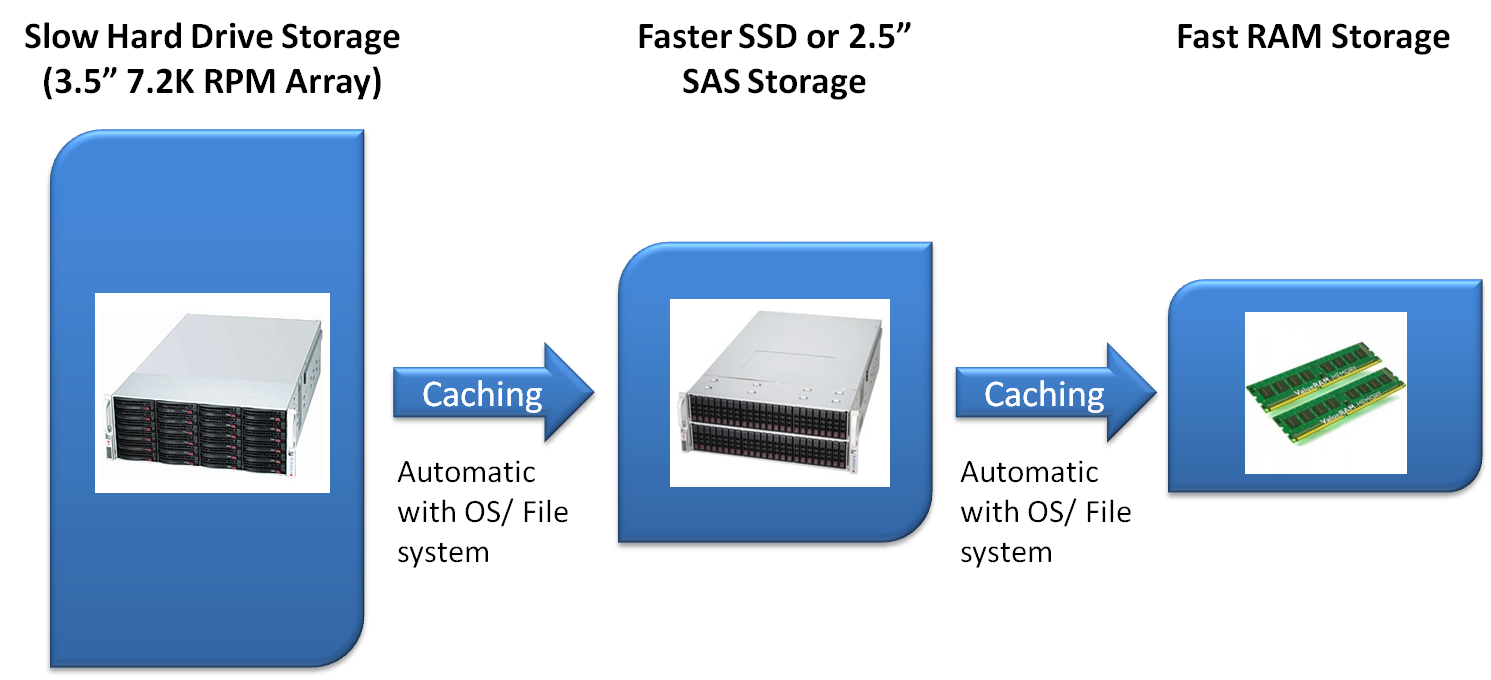

- ARC is the Adaptive Replacement Cache, located in RAM; it is the level 1 cache.

- L2ARC is the Level 2 Adaptive Replacement Cache and should be on a fast device (like an SSD).

Further readings about ZFS

- https://www.freebsd.org/doc/handbook/zfs.html (although written for FreeBSD, I found this doc extremely clear even for less 'techie' admins [note by m.ardito])

- https://pthree.org/2012/04/17/install-zfs-on-debian-gnulinux/ (and all other pages linked there)

and this has some very important information to know before implementing zfs on a production system.

Very well written manual pages

See also